What Is AI-Ready Data?

At its core, AI-ready data is exactly what the name implies: data that’s ready for AI use. In the past, this typically meant aggregated warehouse data from a source such as Snowflake or Google BigQuery. Unfortunately, that’s proven false, as teams are wiring LLMs and agents into existing pipelines and finding that clean, warehoused data still produces incorrect answers, slow agents, and broken integrations.

The change isn't in the data; it's in who's consuming it. Agents spin up ephemerally mid-loop, and the systems they query are too static to keep up. AI-ready means data that an agent can access and reason about, rather than a meticulously tuned, human-curated pipeline upstream.

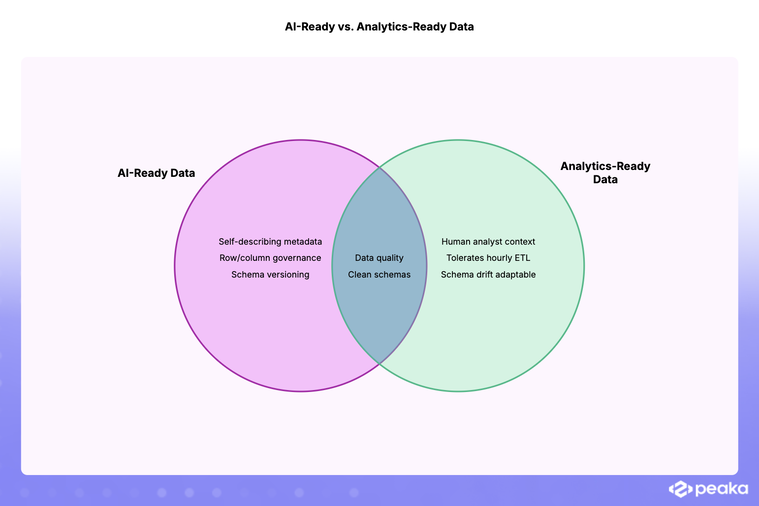

AI-ready vs. analytics-ready

It’s easy to conflate AI-ready data versus analytics-ready data. While they might share similar strategies, they are fundamentally different problems.

Analytics-ready data is shaped for human analysts who can ask in #data-help what flag_v2 means. AI-ready data has to be self-describing, like a queryable data catalog, because the consumer has no institutional memory.

There are some concrete contrasts between these data classes.

-

Latency: Hourly ETL is fine for analysts. An agent answering 'what's my balance?' needs the answer now.

-

Schema stability: Analysts adapt to schema drift, whereas prompts and agents silently stop working.

-

Access pattern: BI tools hit a warehouse, while agents need narrow, permissioned, query-time access across many systems.

-

Trust: An analyst can use judgment when facing an outlier; an LLM confabulates confidently on bad inputs.

The AI doesn't bring judgment, so the data has to carry the context.

The eight properties of AI-ready data

There are a few tenets that make data AI-ready, many of which are non-negotiable and all of which are necessary at scale. They reduce to the following buckets:

-

Accessible at query time. AI-ready data is reachable through a stable, documented interface — SQL, REST, or MCP — that doesn't require human refresh cycles.

-

Semantically described. AI-ready data has table/column descriptions, metric definitions, and entity meanings encoded as metadata in a semantic layer so the AI can read them at query time.

-

Fresh, or knowingly stale. AI-ready data either reflects the current source state or the staleness is explicit and machine-readable.

-

Governed at the row/column level. AI-ready data has row- and column-level security permissions enforced when the AI queries, not assumed because "the agent runs as a service account."

-

Joinable across sources. AI-ready data can combine data from multiple sources in a single query, such as Salesforce + Postgres + Stripe. That’s possible at runtime, without the need for days of engineering work.

-

Schema-stable or versioned. AI-ready data is dynamic but also versioned through data contracts so that changes don't silently brick the agent.

-

Auditable. Every AI-driven read is logged with who/what/when, providing data lineage so a bad agent action can be traced.

-

Right-shaped for the use case. AI-ready data is not a single shape. Data shapes need to be dynamic. RAG wants chunked and embedded text, analytical agents want tables, transactional agents want APIs.

These issues often cause developers to scramble to build a data warehouse or vector database that’ll address them all. However, that is the wrong approach.

Three common misconceptions about implementation

We believe there are three common miscommunications on implementation.

Myth: AI-ready means vector database

Vectors are right for certain use cases, specifically unstructured retrieval. That is, querying docs, transcripts, tickets, etc. They're incredibly inefficient for "what's the MRR of customer X?” That's a SQL question in a chatbot disguise. Most enterprise AI questions are structured-data questions in natural language. Forcing them through a vector DB results in hallucinations and higher latency.

Myth: AI-ready means replacing your data warehouse

Snowflake and BigQuery still earn their keep for analytics. They break down when treated as the only access layer for AI access, where all operational data is duplicated into yet another system, making it outdated, more expensive, and fragmenting governance. Agents additionally need live operational data (Salesforce, Stripe, Notion, prod Postgres) that a back-dated snapshot can't provide. The solution is a dynamic layer on top of your current systems, including your data warehouse, that facilitates real-time query federation without replacing any existing source.

Myth: Cleanliness is all that matters

A pristine Snowflake table with a column named attr_17 is useless to an LLM. Data must be clean, but that alone is not enough. The bar is clean, accessible, semantically described, and governed data. Most stacks possess one or two of these qualities; few have all four.

Data virtualization produces AI-ready data

One effective way to produce AI-ready data is data virtualization.

This method enables AI systems to seamlessly connect to a virtualized data layer that sits atop all your sources, including data warehouses such as Snowflake or BigQuery, and operational systems, rather than bypassing your existing infrastructure. The virtualized data layer integrates with primary data sources (Stripe, Postgres, Salesforce, your existing warehouse, etc.) and provides federated access at runtime.

This virtualized layer, meanwhile, queries the underlying sources and manages a data cache to balance speed with freshness. There is no ETL pipeline; data is synced automatically and on demand using a zero-copy approach, without delay.. The result is a single interface for every source, with the capacity to join data across sources.

Another advantage of a virtualized layer is that governance is immediate. The virtualized data layer will use the user’s credentials to access content, thereby avoiding unauthorized access through the pre-existing access control plane.

These advantages are exactly why we built Peaka. Peaka is a dynamic layer on top of your existing data sources—warehouses, operational systems, and SaaS tools alike—providing a single SQL interface with permissions and semantic context attached at query time.

Final thoughts: Checklist - is your data AI-ready?

To make things easy, consider the following questions. If more than two of these questions are answered with a no, then your data is not AI-ready.

-

Can an AI agent reach this data through a stable, documented interface?

-

Does the schema include human-readable descriptions of every table and column?

-

Are business metrics defined once, somewhere that the AI can read?

-

Are permissions enforced at query time rather than assumed?

-

Can the AI join this data with other systems without a multi-month integration?

-

Is every AI-driven read logged?

-

Does freshness match the use case?

-

Is there a schema/semantic contract, enforced by a schema registry, that the AI can rely on?

If you’re looking to make your data AI-ready without a data warehouse, book a demo with Peaka.

Please

fill out this field

Please

fill out this field